NVIDIA’s Spectrum-X Ethernet With MRC Redefines AI Networking: OpenAI, Microsoft, Oracle Already Deploying

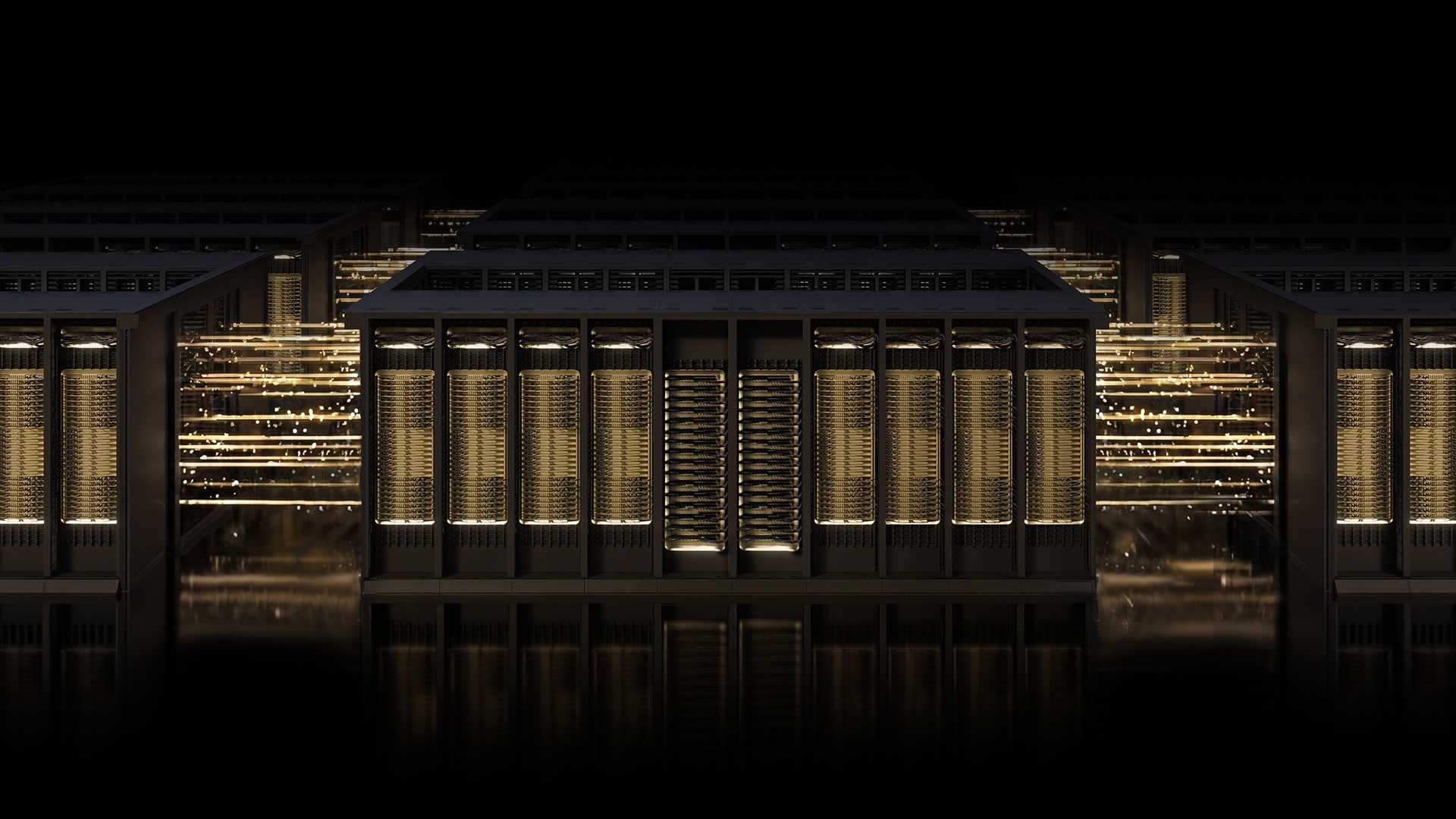

NVIDIA today announced that its Spectrum-X Ethernet platform now supports the Multipath Reliable Connection (MRC) protocol and is being adopted in some of the world’s largest AI factories. The specification is open and has been contributed to the Open Compute Project, and OpenAI, Microsoft, and Oracle have already deployed it to power gigascale AI training runs.

“Deploying MRC in the Blackwell generation was very successful and made possible by a strong collaboration with NVIDIA,” said Sachin Katti, head of industrial compute at OpenAI. “MRC’s end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale.”

MRC is an RDMA transport protocol that allows a single connection to spread traffic across multiple network paths, improving throughput, load balancing, and availability. The effect is like replacing a single-lane road with a street grid that routes cars around traffic jams in real time.
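To make the multipath idea concrete, here is a minimal Python sketch of one connection spreading its packets across several paths and the receiver restoring order by sequence number. It is an illustration only, not the MRC wire protocol: the `Packet` type, the `spray` and `reassemble` functions, and the round-robin path choice are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    seq: int        # position of this packet within the connection
    path: int       # index of the network path chosen for this packet
    payload: bytes


def spray(message: bytes, num_paths: int, mtu: int = 4) -> list[Packet]:
    """Split one message into packets and assign paths round-robin (hypothetical policy)."""
    if num_paths < 1 or mtu < 1:
        raise ValueError("num_paths and mtu must be positive")
    chunks = [message[i:i + mtu] for i in range(0, len(message), mtu)]
    return [Packet(seq, seq % num_paths, chunk) for seq, chunk in enumerate(chunks)]


def reassemble(packets: list[Packet]) -> bytes:
    """Rebuild the message; packets may arrive out of order across paths."""
    return b"".join(p.payload for p in sorted(packets, key=lambda p: p.seq))


packets = spray(b"abcdefghij", num_paths=3)
# Simulate out-of-order arrival by reversing the packet list.
restored = reassemble(list(reversed(packets)))
```

Because each packet carries its own sequence number, arrival order stops mattering, which is what lets a single connection use every path at once.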

Background

Building large-scale AI models requires networking that can handle unprecedented data volumes without bottlenecks. Traditional Ethernet fabrics often suffer from packet loss and congestion, which leave GPUs idle and slow training. NVIDIA’s Spectrum-X was purpose-built to solve these challenges, combining hardware designed for AI workloads with advanced telemetry and fabric control.

Microsoft’s Fairwater and Oracle’s Abilene data centers, two of the largest AI factories ever built, now rely on MRC over Spectrum-X Ethernet to meet the extreme performance and efficiency demands of frontier large language models. These deployments show that MRC works at massive scale, delivering high GPU utilization by balancing traffic across all available paths and dynamically avoiding overloaded routes.
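Avoiding overloaded routes can be pictured as a simple path-selection rule. The sketch below is an assumption for illustration, not how Spectrum-X or MRC actually chooses paths: `pick_path`, the queue-depth metric, and the `spine-*` path names are all hypothetical.

```python
def pick_path(queue_depth: dict[str, int], congested: set[str]) -> str:
    """Return the least-loaded path, skipping any path flagged as congested."""
    if not queue_depth:
        raise ValueError("at least one path is required")
    usable = {p: d for p, d in queue_depth.items() if p not in congested}
    # If every path is flagged, fall back to all of them rather than stalling.
    candidates = usable or queue_depth
    # Ties are broken by path name so the choice is deterministic.
    return min(candidates, key=lambda p: (candidates[p], p))


depths = {"spine-1": 12, "spine-2": 3, "spine-3": 7}
choice = pick_path(depths, congested={"spine-2"})
```

Here `spine-2` has the shortest queue but is flagged as congested, so the rule selects the next-best path instead.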

What This Means

The open release of MRC means any organization can build AI networks on the same transport the hyperscalers use. By enabling intelligent retransmission and real-time congestion avoidance, MRC limits the impact of data loss on long-running jobs and reduces GPU idle time. Administrators also gain granular visibility into traffic flows, simplifying troubleshooting and operational management.
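One way to picture retransmission that limits the cost of loss: resend only the packets that went missing, and send them over paths that are still healthy. The Python sketch below works under that assumption and does not reflect the actual MRC specification; `plan_retransmits` and its round-robin assignment are hypothetical.

```python
def plan_retransmits(total_packets: int, paths: list[str], failed: set[str]) -> dict[int, str]:
    """Map each lost sequence number to a healthy path for retransmission."""
    healthy = [p for p in paths if p not in failed]
    if not healthy:
        raise RuntimeError("no healthy path available")
    # First pass: packets are assigned round-robin; those on failed paths are lost.
    lost = [seq for seq in range(total_packets) if paths[seq % len(paths)] in failed]
    # Second pass: resend only the lost packets, spread across healthy paths.
    return {seq: healthy[i % len(healthy)] for i, seq in enumerate(lost)}


plan = plan_retransmits(6, paths=["a", "b", "c"], failed={"b"})
```

In this run only packets 1 and 4 were sent on the failed path `b`, so only those two are resent. The other four are never touched, which is why a single bad link need not stall the whole job.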

This development signals a shift from proprietary, closed networking solutions to a standardized, open approach that accelerates AI innovation. With MRC, NVIDIA has effectively raised the bar for what Ethernet can achieve in the AI era, making gigascale training more accessible and efficient. Industry leaders are already voting with their deployments, confirming that this protocol is not just theoretical but a proven, production-ready technology.
