Building a Multi-Agent System for Intelligent Ad Delivery: A Step-by-Step Guide

Overview

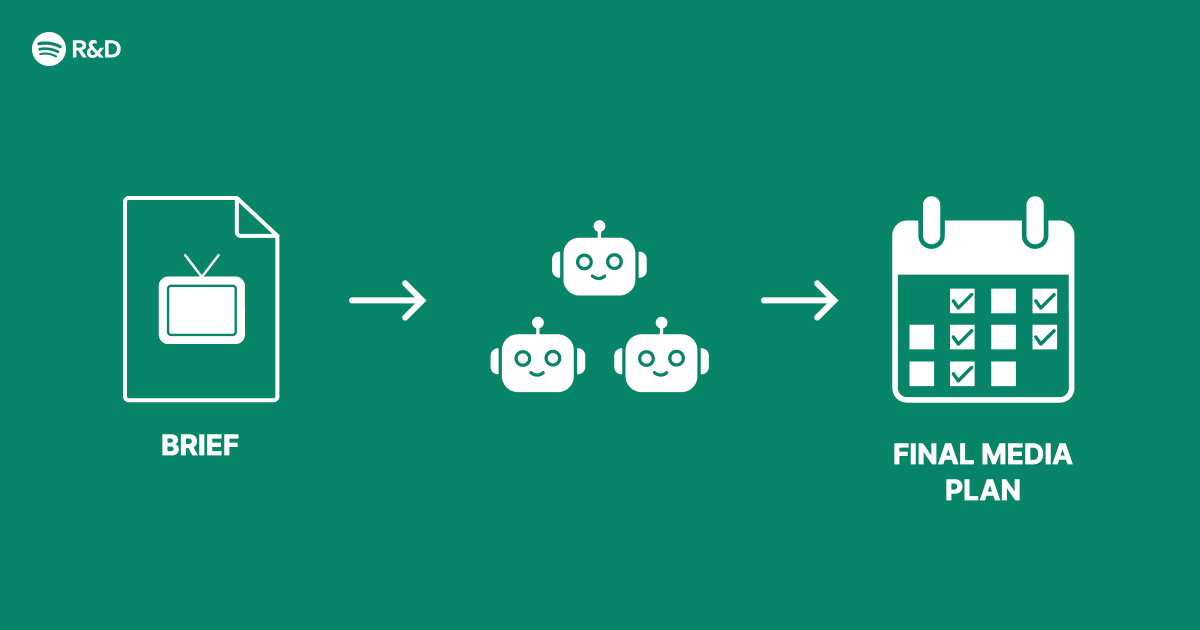

Modern advertising platforms face a structural challenge: balancing relevance, budget efficiency, and user experience across thousands of campaigns in real time. A single monolithic AI model often struggles to handle conflicting objectives and dynamic constraints. At Spotify Engineering, we encountered exactly this problem when scaling our ad system. Instead of shipping a one-size-fits-all AI feature, we rearchitected our ad decision engine around multi-agent systems—a collection of specialized AI agents that collaborate and negotiate to deliver smarter advertising outcomes. This tutorial walks you through the core concepts, prerequisites, and implementation steps for building such a system, complete with code examples and common pitfalls to avoid.

Prerequisites

Knowledge

- Familiarity with Python and basic machine learning concepts

- Understanding of reinforcement learning (RL) fundamentals (value functions, policies)

- Basic knowledge of message-passing or event-driven architectures

Tools & Libraries

- Python 3.8+

- gym (for RL environment simulation)

- ray (for distributed agent execution)

- redis or rabbitmq (for inter-agent communication)

- tensorflow / pytorch (agent policy networks)

Infrastructure

- At least one server or cloud instance (for production, a Kubernetes cluster is recommended)

- Streaming data pipeline (e.g., Kafka) for ad events

Step-by-Step Instructions

1. Define Agent Roles and Objectives

In a multi-agent advertising system, each agent specialises in a subproblem. Typical roles include:

- Bidding Agent – decides optimal bid price per auction

- Budget Allocation Agent – distributes daily budget across campaigns

- Creative Selection Agent – picks the best ad creative for a user context

- Pacing Agent – modulates spend velocity to avoid early budget exhaustion

For each agent, define a clear reward function. For example, the Bidding Agent’s reward might be (conversion_value - bid_cost) while the Pacing Agent’s reward is a penalty for exceeding hourly spend limits.

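# Example: BiddingAgent reward function
def bidding_reward(conversion, cost, value_per_conversion):
    # conversion: 1 if the impression converted, else 0
    # Reward is the value earned from the conversion minus the cost of the bid
    return conversion * value_per_conversion - cost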
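The Pacing Agent's penalty can be sketched in the same style. This is a minimal illustration rather than the reward we shipped: the name `pacing_reward`, the `penalty_weight` parameter, and the linear shape of the penalty are all assumptions made for the example.

```python
def pacing_reward(hourly_spend, hourly_limit, penalty_weight=1.0):
    """Return 0 while spend stays within the limit, else a negative penalty.

    The linear penalty and `penalty_weight` are illustrative assumptions.
    """
    overspend = max(0.0, hourly_spend - hourly_limit)
    if overspend == 0.0:
        return 0.0
    # Penalty grows in proportion to how far spend exceeds the hourly limit.
    return -penalty_weight * overspend


within_limit = pacing_reward(80.0, 100.0)    # no penalty
over_limit = pacing_reward(120.0, 100.0)     # penalised for 20 units of overspend
```

A steeper (for example quadratic) penalty would discourage large overshoots more strongly; which shape works best depends on how strict the hourly limits are.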

2. Design Inter-Agent Communication

Agents cannot operate in isolation. They must share state (e.g., remaining budget, current bid landscape) and negotiate decisions. We used a blackboard architecture where agents write and read from a shared Redis store.

- Use redis-py for simple key-value writes.

- For complex coordination, implement a contract-net protocol: an agent broadcasts a task, others bid on it, and the best bidder executes.

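import redis

# Assumes a Redis server on localhost:6379 (the redis-py defaults)
r = redis.Redis()

# BiddingAgent writes its current bid price
r.set('current_bid', 0.45)

# PacingAgent reads the bid to adjust pace; get() returns None if the key is missing
raw_bid = r.get('current_bid')
current_bid = float(raw_bid) if raw_bid is not None else None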
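The contract-net protocol mentioned above can be sketched in a few lines. The version below runs in a single process with no network or Redis involved, and the `Contractor` class, the `Proposal` record, and the "lowest cost wins" award rule are hypothetical choices made for illustration, not a description of our production protocol.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    agent_name: str
    cost: float  # lower is better in this sketch


class Contractor:
    """An agent that can bid on announced tasks (hypothetical interface)."""

    def __init__(self, name, cost_per_unit):
        self.name = name
        self.cost_per_unit = cost_per_unit

    def propose(self, task_size):
        # Each contractor quotes a cost for the announced task.
        return Proposal(self.name, self.cost_per_unit * task_size)

    def execute(self, task_size):
        return f"{self.name} handled task of size {task_size}"


def contract_net(task_size, contractors):
    """Announce a task, collect proposals, and award it to the cheapest bidder."""
    proposals = [c.propose(task_size) for c in contractors]  # announce and bid
    winner = min(proposals, key=lambda p: p.cost)            # award
    by_name = {c.name: c for c in contractors}
    return by_name[winner.agent_name].execute(task_size)     # execute


agents = [Contractor("pacer", 0.30), Contractor("allocator", 0.20)]
result = contract_net(10, agents)
```

In a distributed deployment the announce, bid, and award steps would each become messages, and the manager would also need a timeout for contractors that never reply.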

3. Train Agents with Multi-Agent Reinforcement Learning

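We used a centralised training, decentralised execution (CTDE) framework: policies are trained together in simulation, and each agent then acts on its own observations at serving time. Each agent learns its own policy using PPO (Proximal Policy Optimization). The environment is a custom ad auction simulator.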

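import gym
from ray.tune.registry import register_env
# Ray 1.x import path; Ray 2.x moved PPO to ray.rllib.algorithms.ppo
from ray.rllib.agents.ppo import PPOTrainer

# Custom simulator; it must subclass RLlib's MultiAgentEnv.
# Assumes its constructor accepts the env_config dict.
from ad_env import AdAuctionEnv

# Register custom ad environment with RLlib
register_env('AdAuction-v0', lambda env_config: AdAuctionEnv(env_config))

# Placeholder spaces; replace with the spaces your simulator exposes
obs_space = gym.spaces.Box(low=0.0, high=1.0, shape=(8,))
act_space = gym.spaces.Box(low=0.0, high=1.0, shape=(1,))

trainer = PPOTrainer(
    config={
        'multiagent': {
            'policies': {
                'bidder': (None, obs_space, act_space, {}),
                'pacer': (None, obs_space, act_space, {}),
            },
            # Agent IDs emitted by the environment must be 'bidder' and 'pacer'
            'policy_mapping_fn': lambda agent_id, *args, **kwargs: agent_id,
        },
        'num_workers': 4,
    },
    env='AdAuction-v0',
)

for i in range(100):
    result = trainer.train()
    print(f'Iteration {i}: reward={result["episode_reward_mean"]}')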
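To make "custom ad auction simulator" more concrete, here is a deliberately tiny stand-in. It is not our simulator: the `ToyAuction` class, the second-price rule, the uniformly random competitors, and the simplification that every won impression yields `conversion_value` are all assumptions chosen to keep the sketch short.

```python
import random


class ToyAuction:
    """One-slot second-price auction against random competitors (illustrative)."""

    def __init__(self, n_competitors=3, seed=0):
        self.rng = random.Random(seed)
        self.n_competitors = n_competitors

    def step(self, our_bid, conversion_value):
        """Run one auction and return (won, price_paid, reward)."""
        competing = [self.rng.uniform(0.0, 1.0) for _ in range(self.n_competitors)]
        highest_other = max(competing)
        if our_bid <= highest_other:
            return False, 0.0, 0.0
        # Second-price rule: the winner pays the highest losing bid.
        price = highest_other
        # Simplification: every won impression is treated as a conversion.
        return True, price, conversion_value - price


env = ToyAuction(seed=42)
won, price, reward = env.step(our_bid=2.0, conversion_value=1.5)
```

A real training environment would add per-step observations (remaining budget, time of day, user context) and expose them per agent, which is what the multi-agent configuration above expects.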

4. Deploy Agents as Microservices

Each agent runs in its own container or separate process, communicating via gRPC or message queues. Use Ray Serve or FastAPI to expose inference endpoints.

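# BiddingAgent service (FastAPI)
from fastapi import FastAPI
from pydantic import BaseModel
import uvicorn

app = FastAPI()

class BidRequest(BaseModel):
    user_context: dict
    campaign_id: str

class PlaceholderModel:
    # Stand-in for the trained bidding policy; load the real model once at startup
    def predict(self, user_context):
        return 0.45

model = PlaceholderModel()

@app.post('/bid')
def get_bid(request: BidRequest):
    # Compute the bid; float() keeps the response JSON-serialisable
    bid = float(model.predict(request.user_context))
    return {'campaign_id': request.campaign_id, 'bid_price': bid}

if __name__ == '__main__':
    uvicorn.run(app, host='0.0.0.0', port=8000)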

5. Implement Monitoring and Feedback Loops

Agents must continuously learn from live data. Stream ad outcomes (impressions, clicks, conversions) into a Kafka topic, and have a feedback agent update each agent’s experience buffer. Then periodically retrain models.

- Use Prometheus for metrics (e.g., average bid price, budget utilization).

- Log all agent decisions with unique IDs for debugging.
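The feedback loop can be sketched without any streaming infrastructure. In the example below, plain dictionaries stand in for messages consumed from the outcome topic, and the `FeedbackAgent` class, its method names, and the event fields `decision_id` and `reward` are hypothetical names introduced for illustration.

```python
import uuid
from collections import defaultdict, deque


class FeedbackAgent:
    """Joins logged decisions with later outcomes and fills per-agent buffers."""

    def __init__(self, buffer_size=10_000):
        self.pending = {}  # decision_id -> (agent_name, observation, action)
        self.buffers = defaultdict(lambda: deque(maxlen=buffer_size))

    def log_decision(self, agent_name, observation, action):
        decision_id = str(uuid.uuid4())  # unique ID for debugging and joins
        self.pending[decision_id] = (agent_name, observation, action)
        return decision_id

    def on_outcome(self, event):
        """Handle one outcome event, e.g. a message consumed from a stream."""
        decision = self.pending.pop(event["decision_id"], None)
        if decision is None:
            return False  # unknown or already-processed decision
        agent_name, observation, action = decision
        self.buffers[agent_name].append((observation, action, event["reward"]))
        return True


feedback = FeedbackAgent()
decision_id = feedback.log_decision("bidder", {"hour": 14}, 0.45)
feedback.on_outcome({"decision_id": decision_id, "reward": 1.2})
```

Keying everything on the decision ID means a duplicated or late outcome event is simply ignored, and the same ID can be attached to log lines and metrics for debugging.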

Common Mistakes

Mistake 1: No Shared State Coordination

Agents that don’t share budget or bid information will overspend or underspend. Always implement a centralised state store (Redis in our case) and enforce read-after-write consistency, so that no agent acts on a budget figure that another agent has already changed.
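The failure mode is easiest to see with a budget check. The sketch below uses an in-memory `BudgetStore` (a hypothetical stand-in for the shared store) with a lock, so that the check and the decrement happen as one step. With a real Redis store, the same guarantee would need an atomic operation such as a transaction or a server-side script; that part is not shown here.

```python
import threading


class BudgetStore:
    """In-memory stand-in for a shared budget key (illustrative only)."""

    def __init__(self, budget):
        self._remaining = budget
        self._lock = threading.Lock()

    def try_spend(self, amount):
        # Check and decrement under one lock so two agents cannot both
        # spend the same remaining budget.
        with self._lock:
            if amount > self._remaining:
                return False
            self._remaining -= amount
            return True

    def remaining(self):
        with self._lock:
            return self._remaining


store = BudgetStore(budget=100)
threads = [threading.Thread(target=store.try_spend, args=(30,)) for _ in range(5)]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

Five agents each try to spend 30 from a budget of 100. Exactly three succeed, and the store never goes negative regardless of thread scheduling.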

Mistake 2: Training Agents in Isolation

If each agent is trained separately against fixed behavior from the others, the agents won’t learn to cooperate. Use multi-agent training in which all agents act in the same environment steps.

Mistake 3: Overly Complex Communication Protocols

Don’t build a full-fledged negotiation system on day one. Start with a simple publish/subscribe broadcast; add complexity only after validating core behavior.
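A starting point can be as small as the in-process bus below. The `MessageBus` class and the `bids` topic name are hypothetical, and a production system would replace this with a message broker, but the interface (subscribe to a topic, publish to a topic) stays the same.

```python
from collections import defaultdict


class MessageBus:
    """Minimal in-process publish/subscribe bus (illustrative)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, message):
        # Deliver the message to every handler registered for the topic
        # and report how many handlers received it.
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


bus = MessageBus()
pacer_inbox = []
bus.subscribe("bids", pacer_inbox.append)
delivered = bus.publish("bids", {"campaign_id": "c1", "bid_price": 0.45})
```

Because publishers never address a specific agent, new agents can be added later by subscribing to existing topics without changing the agents that are already running.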

Mistake 4: Neglecting Latency and Throughput

Advertising decisions must be made in milliseconds. Cache frequent inferences, use async I/O, and precompute features.

Summary

Building a multi-agent architecture for advertising solves the structural problem of competing objectives by decoupling decision-making into specialized, collaborative agents. This guide covered the essential steps: defining agent roles, designing communication, training with multi-agent RL, deploying as microservices, and monitoring. By avoiding common pitfalls (isolated training, lack of coordination, excessive complexity), you can create a system that dynamically adapts to user behavior and campaign goals—just as we did at Spotify. The result is smarter, more efficient ad delivery without sacrificing user experience.
